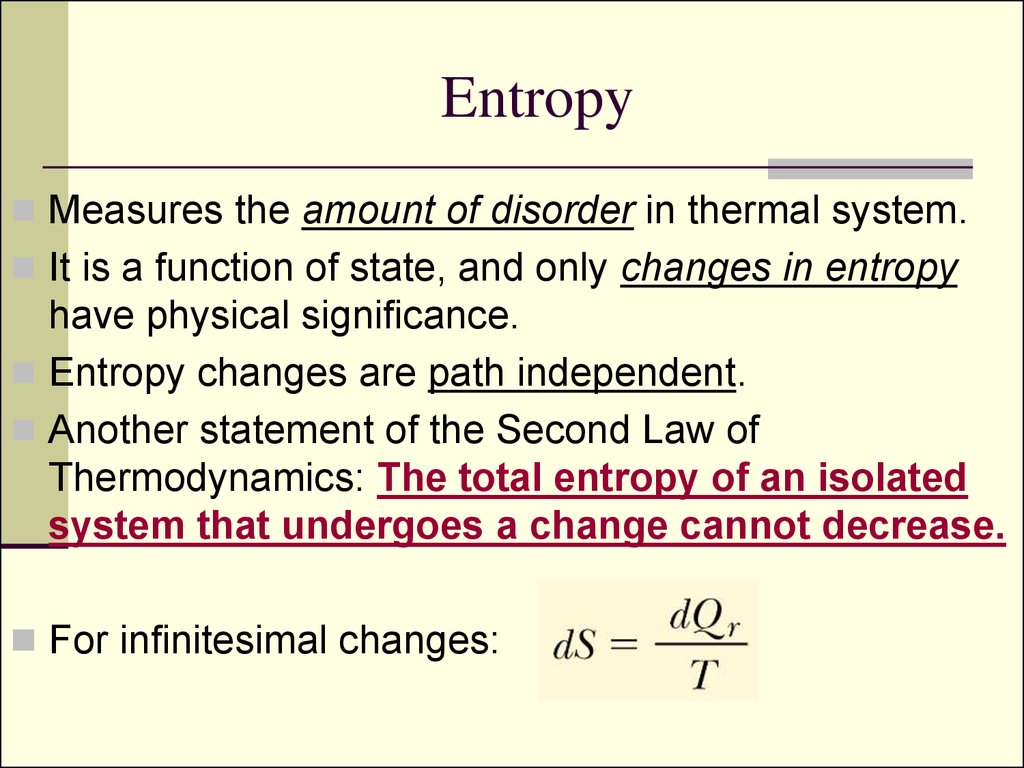

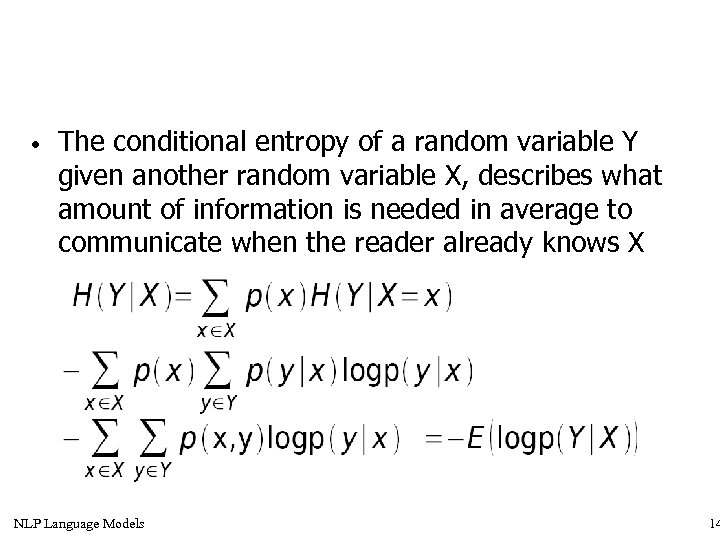

In information theory, the major goal is for one person (a transmitter) to convey some message (over a channel) to another person (the receiver). Claude Shannon, the “father of information theory”, provided a formula for entropy:

$$H = -\sum_{i} p_i \log_b p_i$$

where $p_i$ is the probability of the occurrence of character number $i$ in a given stream of characters and $b$ is the base of the logarithm used. Hence, this is also called Shannon’s entropy.

The amount of uncertainty remaining about the channel input after observing the channel output is called conditional entropy. It is denoted by $H(x \mid y)$.

Mutual Information

Let us consider a channel whose input is X and output is Y. Let the prior uncertainty about the input be the entropy $H(x)$ (this is assumed before the input is applied). To know the uncertainty about the input that remains after the output is observed, let us consider the conditional entropy, given that $Y = y_k$:

$$H\left ( x\mid y_k \right ) = \sum_{j = 0}^{J - 1} p\left ( x_j \mid y_k \right ) \log_{2}\left [ \frac{1}{p\left ( x_j \mid y_k \right )} \right ]$$

where $J$ is the number of input symbols $x_j$. Averaging this over all outputs $y_k$ gives $H(x \mid y)$, and the mutual information is the resulting reduction in uncertainty, $I(x; y) = H(x) - H(x \mid y)$.

We have so far discussed mutual information. The channel capacity is the maximum average mutual information per signaling interval when transmitting over a discrete memoryless channel, where the maximum is taken over the input probabilities; it can be understood as the maximum rate of reliable data transmission. It is denoted by C and is measured in bits per channel use.

Discrete Memoryless Source

A source from which data is emitted at successive intervals, independently of previous values, can be termed a discrete memoryless source. This source is discrete as it is considered not over a continuous time interval but at discrete time intervals. This source is memoryless as it is fresh at each instant of time, without considering the previous values.
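The entropy, conditional entropy, and mutual information described in this section can be sketched in Python roughly as follows. This is only an illustration under stated assumptions: the function names are made up for this example, mutual information is computed with the standard identity I(X;Y) = H(X) − H(X|Y), and the example channel (an equiprobable binary input whose bit is flipped with probability 0.1) is hypothetical rather than taken from the text.

```python
import math

def entropy(probs, base=2):
    """Shannon entropy H = -sum(p_i * log_b(p_i)); zero-probability terms add 0."""
    return -sum(p * math.log(p, base) for p in probs if p > 0)

def conditional_entropy(joint, base=2):
    """H(X|Y) from joint[j][k] = p(x_j, y_k).

    Computes H(X | Y = y_k) for each output y_k and averages it,
    weighting by p(y_k).
    """
    num_outputs = len(joint[0])
    total = 0.0
    for k in range(num_outputs):
        p_yk = sum(row[k] for row in joint)
        if p_yk == 0:
            continue  # an output that never occurs contributes nothing
        posterior = [row[k] / p_yk for row in joint]  # p(x_j | y_k)
        total += p_yk * entropy(posterior, base)
    return total

def mutual_information(joint, base=2):
    """I(X;Y) = H(X) - H(X|Y), the reduction in uncertainty about the input."""
    p_x = [sum(row) for row in joint]
    return entropy(p_x, base) - conditional_entropy(joint, base)

# Hypothetical channel: equiprobable binary input, bit flipped with probability 0.1.
flip = 0.1
joint = [
    [0.5 * (1 - flip), 0.5 * flip],
    [0.5 * flip, 0.5 * (1 - flip)],
]

print("H(X)   =", entropy([0.5, 0.5]))
print("H(X|Y) =", conditional_entropy(joint))
print("I(X;Y) =", mutual_information(joint))
```

Finding the channel capacity would additionally require maximizing `mutual_information` over the input probabilities, which this sketch does not attempt.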

If the event occurred some time back, there is a condition of having some information. Uncertainty, surprise, and having information arise at different times, and the difference between these three conditions helps us gain knowledge about the probabilities of the occurrence of events.

When we observe the possibilities of the occurrence of an event, and how surprising or uncertain it would be, we are trying to get an idea of the average information content from the source of the event. Entropy can be defined as a measure of the average information content per source symbol.
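As a small worked example of entropy as average information content, consider a hypothetical source (not taken from the text) that emits three symbols $a$, $b$, $c$ with probabilities $1/2$, $1/4$, $1/4$. Assuming the information content of a symbol with probability $p$ is measured as $\log_2(1/p)$ bits:

$$
\begin{aligned}
I(a) &= \log_2 \frac{1}{1/2} = 1 \text{ bit}, \qquad I(b) = I(c) = \log_2 \frac{1}{1/4} = 2 \text{ bits} \\
H &= \tfrac{1}{2} \cdot 1 + \tfrac{1}{4} \cdot 2 + \tfrac{1}{4} \cdot 2 = 1.5 \text{ bits per symbol}
\end{aligned}
$$

The rarer symbols are more surprising and carry more information, and the entropy is the probability-weighted average over all symbols.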

The probability that it’s raining and I’m wearing a coat is the probability that it is raining, times the probability that I’d wear a coat if it is raining:

$$p(\text{rain}, \text{coat}) = p(\text{rain}) \cdot p(\text{coat} \mid \text{rain})$$

It rains 25% of the time, and if it is raining there’s a 75% chance that I’d wear a coat. So, the probability that it is raining and I’m wearing a coat is 25% times 75%, which is approximately 19%.

Information is the source of a communication system, whether it is analog or digital. Information theory is a mathematical approach to the study of coding of information along with the quantification, storage, and communication of information. If we consider an event, there are three conditions of occurrence: uncertainty if the event has not occurred, surprise if it has just occurred, and having some information if it occurred some time back.
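Returning to the rain-and-coat calculation in this section, the product rule can be sketched in Python as below. The 25% chance of rain and the 75% chance of a coat given rain come from the text; the chance of a coat on a sunny day is not given, so the 0.25 used here is a hypothetical placeholder, as are the function and variable names.

```python
import math

# From the text: it rains 25% of the time and p(coat | rain) = 0.75.
# p(coat | sunny) is NOT given in the text; 0.25 is a hypothetical
# placeholder so that the joint table is complete.
p_weather = {"rain": 0.25, "sunny": 0.75}
p_coat_given = {"rain": 0.75, "sunny": 0.25}

def joint_distribution(p_weather, p_coat_given):
    """Build p(weather, clothing) using p(w, c) = p(w) * p(c | w)."""
    joint = {}
    for weather, p_w in p_weather.items():
        p_coat = p_coat_given[weather]
        joint[(weather, "coat")] = p_w * p_coat
        joint[(weather, "no coat")] = p_w * (1 - p_coat)
    return joint

joint = joint_distribution(p_weather, p_coat_given)

# 0.25 * 0.75 = 0.1875, the "approximately 19%" from the text.
print(joint[("rain", "coat")])  # prints 0.1875

# A valid joint distribution sums to 1 over all combinations.
assert math.isclose(sum(joint.values()), 1.0)
```

Storing the conditional probabilities per weather condition keeps the code close to the formula: each joint entry is one marginal multiplied by one conditional.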

I love the feeling of having a new way to think about the world. I especially love when there’s some vague idea that gets formalized into a concrete concept. Information theory is a prime example of this.

Information theory gives us precise language for describing a lot of things. How uncertain am I? How much does knowing the answer to question A tell me about the answer to question B? How similar is one set of beliefs to another? I’ve had informal versions of these ideas since I was a young child, but information theory crystallizes them into precise, powerful ideas. These ideas have an enormous variety of applications, from the compression of data, to quantum physics, to machine learning, and vast fields in between.

Unfortunately, information theory can seem kind of intimidating. I don’t think there’s any reason it should be. In fact, many core ideas can be explained completely visually!

Visualizing Probability Distributions

Before we dive into information theory, let’s think about how we can visualize simple probability distributions. We’ll need this later on, and it’s convenient to address now. As a bonus, these tricks for visualizing probability are pretty useful in and of themselves!

Sometimes it rains, but mostly there’s sun! Let’s say it’s sunny 75% of the time. If it is raining, there’s a 75% chance that I’d wear a coat.
